Euclidean Distance Analysis Is the New Baseline for Verifying Face-Swap Videos

Euclidean distance analysis provides a practical baseline for detecting modern face-swap deepfakes by comparing biometric landmarks across video frames.

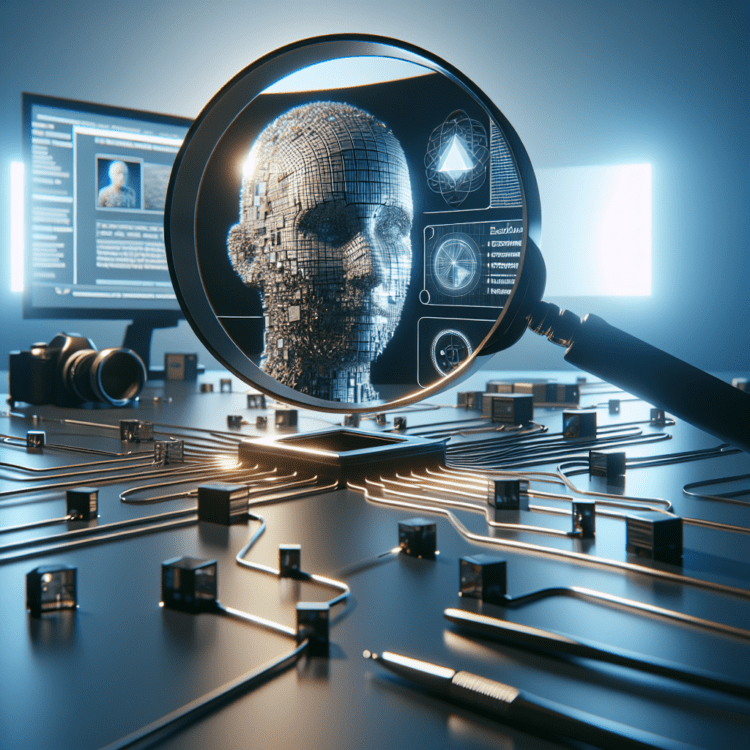

Why visual evidence is under renewed threat

The rise of zero-cost, unlimited face-swap video tools has pushed high-fidelity identity substitution out of the realm of specialists and into the hands of anyone with a webcam and an internet connection. That shift matters for developers, investigators, and organizations that rely on video as documentary evidence: the integrity of a single frame no longer guarantees the identity of the person it appears to show. In response, verification work is migrating away from single-frame spatial checks toward methods that measure geometric and temporal consistency across a video. Euclidean distance analysis of facial landmarks has emerged from this change as a pragmatic, explainable baseline for assessing whether the person shown in a clip matches a verified reference photo.

How modern face-swapping changes detection

Early deepfakes were often detectable by spatial artifacts visible in individual frames: jagged hairlines, mismatched lighting, or subtle double-edges around facial features. Those cues were useful because they let classifiers and hand-crafted detectors catch inconsistencies inside a static image. According to recent reporting and practitioner experience, contemporary face-swap algorithms no longer simply paste a new texture over existing pixels. Instead, they map a source identity onto the motion and lighting envelope of an existing target video, preserving the target’s dynamics while recalculating skin tone and texture per frame. That technical change moves the reliable signals of manipulation out of the spatial domain and into the temporal and biomechanical domains—how a face moves, the relative geometry of landmarks over time, and biologically constrained ratios that should remain stable for a given person.

Euclidean distance analysis as a forensic baseline

For verification pipelines and investigative tooling, this evolution implies a change in what “same person” means algorithmically. Rather than merely training a classifier to output a "deepfake" probability, forensic systems can compute Euclidean distances between biometric landmarks extracted from the video and those from a verified reference image. Euclidean distance—the straight-line distance between corresponding points in multi-dimensional feature space—lets engineers quantify geometric deviation frame by frame and across a sequence. When applied in batch to dozens or hundreds of frames, the technique surfaces whether the geometric signature of the subject in the clip drifts beyond a narrow threshold relative to the reference. If these deviations are pronounced or biologically implausible during natural head turns or expressions, that presents measurable evidence that the on-screen identity does not match the claimed person.
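The scoring idea described above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: it assumes landmarks have already been extracted and normalized into (x, y) pairs, and the function names, the three-point faces, and the 0.05 threshold are hypothetical values chosen only for the example.

```python
import math

def frame_distance(landmarks, reference):
    """Mean Euclidean distance between corresponding (x, y) landmarks."""
    if len(landmarks) != len(reference):
        raise ValueError("landmark sets must have the same length")
    total = sum(math.dist(p, q) for p, q in zip(landmarks, reference))
    return total / len(reference)

def score_sequence(frames, reference, threshold):
    """Return per-frame scores and the indices of frames above the threshold."""
    scores = [frame_distance(f, reference) for f in frames]
    flagged = [i for i, s in enumerate(scores) if s > threshold]
    return scores, flagged

# Hypothetical, already-normalized landmarks (three points per face).
reference = [(0.0, 0.0), (1.0, 0.0), (0.5, 1.0)]
frames = [
    [(0.0, 0.0), (1.0, 0.0), (0.5, 1.0)],  # identical to the reference
    [(0.0, 0.0), (1.0, 0.0), (0.5, 1.3)],  # one landmark displaced by 0.3
]

# The threshold is an assumption; a real value must be validated externally.
scores, flagged = score_sequence(frames, reference, threshold=0.05)
print(scores, flagged)
```

Averaging over landmarks keeps the score comparable between detectors that emit different numbers of keypoints, though a per-landmark breakdown is usually more useful for reporting.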

What explainable evidence looks like

Explainability is a practical necessity in investigative contexts. A numeric confidence score from a black-box model may be insufficient for case work or court submissions; investigators and auditors need interpretable artifacts that link measurement to claim. Tools built around Euclidean analysis can output structured, visual reports that show how specific landmarks deviate over time. Examples of actionable metrics include changes in inter-pupillary distance, variation in the ratio between the nasal bridge and mouth corners, and frame-by-frame landmark trajectories during motion. If a head pan causes a landmark ratio to change in a way that would be biologically impossible for the reference subject, that pattern can be presented as quantifiable, visual evidence rather than an opaque label. For forensic practitioners, this form of documentation—numbers coupled with visualizations—helps bridge technical analysis and legal or journalistic reporting needs.
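As an illustration of the metrics named above, the sketch below computes inter-pupillary distance and one possible bridge-to-mouth ratio. The landmark names, the coordinates, and the exact ratio definition (distance from the nasal bridge to the midpoint of the mouth corners, divided by inter-pupillary distance) are assumptions made for this example; the source does not specify a formula.

```python
import math

def ipd(face):
    """Inter-pupillary distance in the face's own coordinate units."""
    return math.dist(face["left_pupil"], face["right_pupil"])

def bridge_to_mouth_ratio(face):
    """Nasal-bridge-to-mouth distance divided by IPD (hypothetical definition)."""
    (lx, ly), (rx, ry) = face["mouth_left"], face["mouth_right"]
    mouth_mid = ((lx + rx) / 2, (ly + ry) / 2)
    return math.dist(face["nasal_bridge"], mouth_mid) / ipd(face)

def relative_change(value, baseline):
    """Fractional deviation of a measurement from its reference value."""
    return abs(value - baseline) / baseline

# Hypothetical pixel coordinates for a reference photo and two video frames.
reference = {"left_pupil": (30, 40), "right_pupil": (70, 40),
             "nasal_bridge": (50, 40),
             "mouth_left": (35, 90), "mouth_right": (65, 90)}
# Same face at twice the size: the ratio should be unchanged.
closer = {k: (2 * x, 2 * y) for k, (x, y) in reference.items()}
# Mouth sits lower relative to the eyes: the ratio shifts.
altered = dict(reference, mouth_left=(35, 100), mouth_right=(65, 100))

base = bridge_to_mouth_ratio(reference)
change_closer = relative_change(bridge_to_mouth_ratio(closer), base)
change_altered = relative_change(bridge_to_mouth_ratio(altered), base)
print(base, change_closer, change_altered)
```

Dividing by inter-pupillary distance makes the ratio insensitive to how large the face appears in the frame, which is why ratios tend to be easier to compare across shots than raw pixel distances. Out-of-plane head rotation still changes 2D ratios, so a deviation seen during a head turn needs to be read with pose in mind.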

Who benefits from accessible geometric verification

Historically, high-fidelity forensic tooling has been costly and gated: enterprise-grade platforms that package explainable analysis and reporting often arrive with five-figure contracts and enterprise procurement cycles. The recent democratization of core algorithms and the availability of lower-cost platforms change that dynamic. The same geometric techniques that power expensive suites can now be provided in lighter-weight form on accessible platforms, allowing solo investigators, small private firms, newsrooms, and mid-sized organizations to apply Euclidean landmark analysis without enterprise budgets. That levelling of access means more actors can ground visual evidence in data rather than intuition, and investigative workflows become less dependent on a few specialized vendors.

Designing a verification pipeline around temporal consistency

Practitioners building verification systems must shift their focus from hunting for spatial artifacts in high-resolution frames to consistency checks that span time. In principle, a pragmatic pipeline has five stages:

- Landmark extraction: Use a reliable facial landmark detector to produce consistent keypoints on every frame of a clip.

- Reference registration: Derive a biometric signature from a verified reference photo—normalized for pose and scale—against which to compare video frames.

- Euclidean scoring: Compute per-frame Euclidean distances between corresponding landmarks, then aggregate these distances across windows or the full sequence to detect drift.

- Biological plausibility checks: Translate landmark motion into ratios and distances—inter-pupillary distances, nasal-to-mouth ratios, relative eyebrow curvature—and flag changes that contradict expected human anatomy or consistent subject geometry.

- Explainable reporting: Produce plots and annotated frames that illustrate where and when deviations occur, supplemented by aggregated statistics for summary.

These components address what verification does and how it works without invoking proprietary methods or making unverified performance claims. They align directly with the need to quantify temporal consistency rather than rely solely on spatial artifact detection.
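The aggregation part of the Euclidean scoring stage can be sketched as a sliding-window average over per-frame distances. The scores, window size, and threshold below are invented for illustration, and the helper names are hypothetical; landmark extraction itself is assumed to have happened upstream.

```python
from statistics import mean

def windowed_means(scores, window):
    """Mean score for every run of `window` consecutive frames."""
    if window <= 0 or window > len(scores):
        raise ValueError("window must be between 1 and len(scores)")
    return [mean(scores[i:i + window])
            for i in range(len(scores) - window + 1)]

def drifting_windows(scores, window, threshold):
    """Start indices of windows whose mean distance exceeds the threshold."""
    means = windowed_means(scores, window)
    return [i for i, m in enumerate(means) if m > threshold]

# Hypothetical per-frame distances: stable at first, then drifting upward.
scores = [0.02, 0.03, 0.02, 0.03, 0.12, 0.14, 0.13, 0.15]

means = windowed_means(scores, window=4)
drift = drifting_windows(scores, window=4, threshold=0.1)
print(means, drift)
```

A windowed mean smooths single-frame noise but can still be pulled upward by one extreme value, so a median or a trimmed mean may be a better fit for heavily compressed footage.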

Model choices and temporal analysis considerations

Sequence modeling remains part of the toolkit for temporal anomaly detection. The source discussion explicitly mentions sequence models such as LSTMs as one way to find cross-frame anomalies, contrasting them with detectors that still focus primarily on static spatial artifacts. Sequence models can learn temporal patterns and detect unusual transitions, while Euclidean analysis provides a simple, deterministic geometric baseline that yields interpretable outputs. Both approaches can complement each other: sequence models detect anomalous temporal dynamics, and landmark-based Euclidean scoring converts those dynamics into human-interpretable metrics. Importantly, the emphasis in investigative contexts is on transparency—so architectures that can be inspected and whose outputs can be traced to landmark motion are favored for evidentiary uses.

Costs, accessibility, and the investigator’s toolkit

The market’s bifurcation—costly enterprise forensic suites on one side and freely available face-swap generators on the other—creates asymmetry in how evidence is produced and contested. The democratization of verification algorithms narrows that asymmetry by enabling lower-cost implementations of landmark comparison and reporting. For smaller shops, lighter-weight Euclidean analysis services can provide immediate, defensible assessments of whether a clip’s geometric behavior matches a verified subject. That said, the article does not claim parity in functionality or exhaustive feature sets between inexpensive platforms and enterprise solutions; rather, it reflects the observation that basic, high-value geometric checks are now within broader reach.

Investigative practice: what to present and how to interpret it

In investigative workflows, analysts are advised to treat Euclidean measures as indicators rather than absolute adjudicators. A pipeline that highlights landmark deviations should be accompanied by context: the reference photo’s pose and expression, video compression artifacts, occlusions, and the conditions under which landmarks were extracted. Presenting time-series plots of per-landmark Euclidean distances alongside annotated frames allows nontechnical reviewers to see both the numeric deviation and the visual frame where it occurs. Where deviations are large and coincide with natural motion that should not alter biometric ratios, the evidence for an identity mismatch grows stronger. Conversely, small deviations in isolated frames—especially under heavy compression—warrant cautious interpretation.
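One simple way to separate isolated spikes from sustained deviation is to measure the longest run of consecutive frames above a threshold. The sketch below is an assumption about how that distinction could be encoded, not a method taken from the source; the labels, threshold, and minimum run length are hypothetical.

```python
def longest_run_above(scores, threshold):
    """Length of the longest run of consecutive scores above the threshold."""
    longest = current = 0
    for s in scores:
        current = current + 1 if s > threshold else 0
        longest = max(longest, current)
    return longest

def interpret(scores, threshold, min_run):
    """Label a score sequence as an indicator, not a verdict."""
    run = longest_run_above(scores, threshold)
    if run >= min_run:
        return "sustained deviation"
    if run > 0:
        return "isolated deviation"
    return "within threshold"

# Hypothetical per-frame distances from two clips.
one_bad_frame = [0.02, 0.30, 0.03, 0.02]
persistent = [0.02, 0.15, 0.18, 0.20, 0.17, 0.03]

label_a = interpret(one_bad_frame, threshold=0.1, min_run=3)
label_b = interpret(persistent, threshold=0.1, min_run=3)
print(label_a, label_b)
```

An "isolated deviation" label is a prompt to inspect the frame for compression damage or occlusion before drawing any conclusion about identity.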

Broader implications for developers and businesses

The migration from spatial to temporal verification has implications beyond forensic labs. Developers working in computer vision and biometrics must revise evaluation criteria and dataset design to emphasize temporal consistency and biomechanical plausibility. Product teams that build identity verification, content moderation, or automated evidence curation will need to incorporate longitudinal checks into their pipelines. For businesses that handle user-generated video, the shift also affects risk models: a single visual match may no longer suffice for sensitive actions such as account recovery or legal substantiation unless it is backed by temporal consistency checks. The rise of accessible geometric analysis tools changes market dynamics by enabling more organizations to operationalize these checks, even as freely available face-swap generators make counterfeiting identities easier at scale. That balance will shape investment in detection, auditability, and policy.

Limitations and open questions

Conservative practice dictates that Euclidean landmark analysis be presented as one part of a multi-evidence approach. The source material notes practical constraints—including the need for explainability and the changing capabilities of synthesis tools—but does not provide performance metrics, false-positive rates, or specific thresholds for decision-making. Those are implementation decisions that must be validated externally. Likewise, while sequence models like LSTMs are mentioned as an option for temporal anomaly detection, the source does not prescribe a single superior architecture; rather, it frames Euclidean analysis as a principled baseline that is easy to interpret and integrate into reporting workflows.

Practical reader guidance

What the technique does: Euclidean distance analysis measures geometric deviations between facial landmarks in video frames and a verified reference image, producing per-frame and aggregated scores that expose temporal inconsistency.

How it works in brief: Landmarks are extracted, normalized for scale and pose, and then corresponding points are compared using straight-line distances in feature space; aggregated deviations are used to decide whether the filmed subject retains a stable biometric signature.
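The normalization step can be sketched as a similarity transform anchored on the eyes: translate so the midpoint between the eyes is the origin, rotate so the eye line is horizontal, and divide by the distance between the eyes. This is one common convention, assumed here for illustration; it corrects only for in-plane rotation, translation, and scale, not for out-of-plane head turns. The function name and index parameters are hypothetical.

```python
import math

def normalize(points, left_eye_idx=0, right_eye_idx=1):
    """Map (x, y) landmarks into an eye-aligned, unit-eye-distance frame."""
    lx, ly = points[left_eye_idx]
    rx, ry = points[right_eye_idx]
    cx, cy = (lx + rx) / 2, (ly + ry) / 2          # midpoint between eyes
    scale = math.dist((lx, ly), (rx, ry))          # distance between eyes
    if scale == 0:
        raise ValueError("eye landmarks coincide; cannot normalize")
    angle = math.atan2(ry - ly, rx - lx)           # tilt of the eye line
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)
    normalized = []
    for x, y in points:
        tx, ty = x - cx, y - cy
        normalized.append(((tx * cos_a - ty * sin_a) / scale,
                           (tx * sin_a + ty * cos_a) / scale))
    return normalized

# Hypothetical face: left eye, right eye, and one lower landmark.
face = [(0.0, 0.0), (2.0, 0.0), (1.0, 2.0)]
# The same face rotated 90 degrees, scaled by 3, and shifted by (10, 5).
moved = [(10.0, 5.0), (10.0, 11.0), (4.0, 8.0)]

norm_face = normalize(face)
norm_moved = normalize(moved)
print(norm_face)
print(norm_moved)
```

After normalization both inputs land on approximately the same coordinates, so any remaining per-landmark distance reflects a difference in facial geometry rather than framing.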

Why it matters: Modern face-swapping algorithms preserve motion and lighting from a target clip while altering identity, which undermines single-frame spatial checks; geometric and temporal checks detect identity-level inconsistencies that are harder for current swap tools to mask.

Who can use it: Developers of verification pipelines, computer vision engineers, digital forensic teams, private investigators, and newsrooms can all incorporate landmark-based Euclidean checks; the recent democratization of algorithms lowers the barrier for smaller teams.

When it’s usable: As reported, accessible implementations already exist on lighter-weight platforms, making Euclidean landmark analysis available today at lower cost than legacy enterprise suites.

Standards for reporting and evidentiary use

In contexts where video analysis may inform casework or legal processes, the emphasis is on traceability: every numeric claim should be tied to the landmarks and frames that produced it. Visualizations, annotated frames, and a clear description of the reference’s provenance strengthen the credibility of a report. The source material stresses that an opaque “98% deepfake” label is insufficient for case analysis; investigators require structured outputs that can be independently inspected and explained.
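A traceable output might look like the structured record sketched below, where each flagged measurement carries the frame index and landmark name that produced it. The field names, landmark labels, threshold, and reference description are all hypothetical; they illustrate the shape of an inspectable report, not an established schema.

```python
import json
import math

def build_report(frames, reference, names, threshold, reference_source):
    """Collect every above-threshold measurement with its frame and landmark."""
    findings = []
    for frame_idx, landmarks in enumerate(frames):
        for name, p, q in zip(names, landmarks, reference):
            d = math.dist(p, q)
            if d > threshold:
                findings.append({
                    "frame": frame_idx,
                    "landmark": name,
                    "distance": round(d, 4),
                    "threshold": threshold,
                })
    return {
        "reference_source": reference_source,  # provenance of the reference
        "frames_analyzed": len(frames),
        "findings": findings,
    }

# Hypothetical normalized landmarks and labels.
names = ["left_eye", "right_eye", "nose_tip"]
reference = [(0.0, 0.0), (1.0, 0.0), (0.5, 1.0)]
frames = [
    [(0.0, 0.0), (1.0, 0.0), (0.5, 1.0)],
    [(0.0, 0.0), (1.0, 0.0), (0.5, 1.3)],
]

report = build_report(frames, reference, names, threshold=0.05,
                      reference_source="verified ID photo (hypothetical)")
report_json = json.dumps(report, indent=2)
print(report_json)
```

Because every entry names its frame and landmark, a reviewer can open the cited frame and check the measurement independently instead of relying on a single aggregate score.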

Looking forward, geometric and temporal verification will likely remain central to investigative practice as synthesis tools continue to evolve. The immediate priority for developers and organizations is to adopt methods that produce interpretable, reproducible evidence—approaches such as Euclidean distance analysis answer that need by turning landmark motion into quantifiable, visual artifacts. As tooling becomes more accessible, expect verification to shift from a specialist activity to a more routine part of editorial, legal, and security workflows, with continued attention on reproducibility, validation of thresholds, and integration with sequence models and other temporal detectors.