StaticCameraManager’s Heuristic Scoring Engine Picks Optimal Unity Camera Viewpoints for Action Recognition

StaticCameraManager uses a heuristic scoring algorithm—visibility, angle, and distance—to select the best Unity camera node for clear action recognition.

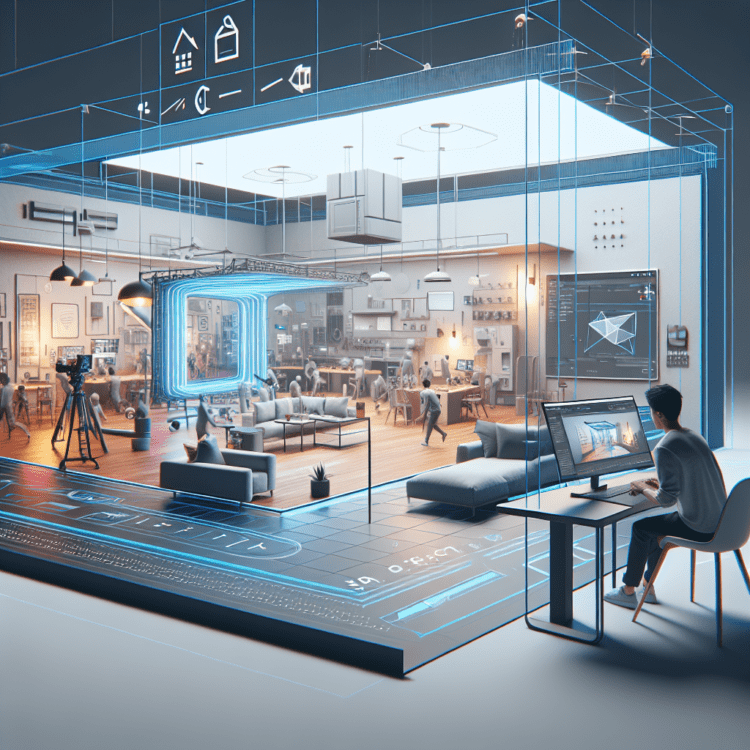

The StaticCameraManager introduces a deterministic, physics-aware approach to choosing where a virtual or robot-mounted camera should “stand” inside a simulated room to capture the most useful visual data. Rather than selecting nodes at random from a set of pre-placed viewpoints, the system computes a composite quality score for each candidate and picks the highest-ranked node. This heuristic scoring matters because it converts intuitive rules of framing and occlusion into a repeatable pipeline that improves downstream visual models, reduces wasted captures, and simplifies integration with analytics or AI back ends.

Why deterministic viewpoint selection changes the capture pipeline

In environments where multiple virtual camera nodes exist—often four or more in a room—deciding which position yields the clearest, most semantically rich view is nontrivial. Occlusion by furniture, poor facing direction relative to the subject, and excessive distance all diminish the utility of a frame for tasks such as action recognition, visual-language model (VLM) grounding, or activity logging. StaticCameraManager tackles this by assigning each node a numeric score derived from three physical constraints: visibility (line-of-sight), angular alignment with the subject, and a distance-based quality factor. Bringing these constraints together into one weighted score lets the capture system prioritize practical data quality over aesthetic or arbitrary choices.

How the scoring formula balances competing constraints

The system computes a final score for each node with a weighted sum of three normalized sub-scores:

FinalScore = 0.5 × Visibility + 0.3 × AngleFactor + 0.2 × DistanceFactor

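Visibility carries the largest weight because a camera with a perfect angle and ideal distance is useless if the subject is occluded. With a binary visibility value and sub-scores normalized to the range 0 to 1, the 0.5 weight also fixes the ordering: an occluded node can score at most 0.3 + 0.2 = 0.5, while a visible node scores at least 0.5, so an occluded node never outranks a visible one. AngleFactor rewards viewpoints that place the subject near the center of the camera’s field of view (FOV), improving semantic clarity for gestures and interactions. DistanceFactor favors nodes that keep the subject within a practical capture range so that critical details, such as hand-to-object interactions and facial expressions, remain visible at pixel level.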
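As a minimal sketch of the weighted sum, the following engine-agnostic Python mirrors the formula above. The function and constant names (`final_score`, `best_node`, `W_VISIBILITY`, and so on) are illustrative assumptions, not identifiers from StaticCameraManager itself; only the three weights come from the text.

```python
from typing import Dict

# Weights from the formula in the text; the constant names are illustrative.
W_VISIBILITY = 0.5
W_ANGLE = 0.3
W_DISTANCE = 0.2


def final_score(visibility: float, angle_factor: float, distance_factor: float) -> float:
    """Weighted sum of three sub-scores, each expected to lie in [0, 1]."""
    inputs = (
        ("visibility", visibility),
        ("angle_factor", angle_factor),
        ("distance_factor", distance_factor),
    )
    for name, value in inputs:
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return (
        W_VISIBILITY * visibility
        + W_ANGLE * angle_factor
        + W_DISTANCE * distance_factor
    )


def best_node(scores: Dict[str, float]) -> str:
    """Return the name of the highest-scoring node (first one wins on ties)."""
    return max(scores, key=scores.get)
```

Validating the inputs up front keeps a mis-normalized sub-score from silently dominating the ranking.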

Visibility: line-of-sight checks as a gatekeeper

The first step is a binary visibility test: does the node have an unobstructed line from its position to the subject’s aim point? In practice this is implemented with a physics linecast (or equivalent raycast) that checks whether any collider intervenes between the node and the target. If the ray hits any object that is not the subject (or not a child of the subject’s transform), the node is treated as occluded.

Treating visibility as a hard gate prevents nodes that are partially or fully blocked by walls, furniture, or props from being chosen, regardless of how favorable other metrics might look. This is particularly important in real-world-analog simulations where occluders are abundant and action semantics depend on unobstructed viewpoints. Engineers should also decide how to treat partial occlusions (for example, a hand partially hidden behind a shoulder): either keep the binary pass/fail, or compute a graded visibility value from multiple sample rays across the subject’s bounding box.
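The binary test can be sketched outside the engine as a segment-versus-collider check. In Unity this role would typically be played by `Physics.Linecast`; the Python below is only a stand-in that assumes spherical colliders, and the `Sphere` type, its `owner` tag, and the `is_visible` helper are hypothetical names introduced for illustration.

```python
from typing import NamedTuple, Sequence, Tuple

Vec3 = Tuple[float, float, float]


class Sphere(NamedTuple):
    center: Vec3
    radius: float
    owner: str  # hypothetical tag naming the scene object that owns the collider


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def segment_hits_sphere(start: Vec3, end: Vec3, sphere: Sphere) -> bool:
    """True if the segment start->end passes within the sphere's radius."""
    direction = _sub(end, start)
    length_sq = _dot(direction, direction)
    if length_sq == 0.0:
        t = 0.0
    else:
        # Parameter of the closest point on the segment, clamped to [0, 1].
        t = max(0.0, min(1.0, _dot(_sub(sphere.center, start), direction) / length_sq))
    closest = (
        start[0] + t * direction[0],
        start[1] + t * direction[1],
        start[2] + t * direction[2],
    )
    offset = _sub(sphere.center, closest)
    return _dot(offset, offset) <= sphere.radius ** 2


def is_visible(node_pos: Vec3, aim_point: Vec3, colliders: Sequence[Sphere],
               subject: str = "subject") -> bool:
    """Binary visibility: any collider not owned by the subject blocks the view."""
    return not any(
        c.owner != subject and segment_hits_sphere(node_pos, aim_point, c)
        for c in colliders
    )
```

Colliders owned by the subject are skipped, matching the rule that hitting the subject or one of its children does not count as occlusion.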

AngleFactor: favoring views that reveal semantics

Once a node passes the visibility check, the algorithm evaluates angular alignment. For many recognition tasks, front and side views expose arm movement, object interactions, and facial cues more reliably than rear views. StaticCameraManager normalizes the subject-to-camera angle relative to the camera’s FOV center so that nodes closer to the central viewing axis score higher.

Concretely, the system computes the angular offset between the camera’s principal axis and the vector pointing at the subject, then converts that offset into a normalized factor clamped to the range 0 to 1. A smaller offset (subject near the center of view) yields a higher AngleFactor. This approach gives the scoring engine semantic sensitivity: two visible nodes may both see a drinking motion, but the one that shows the hand-to-mouth relationship more clearly will score better and therefore be selected.
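One way to turn the angular offset into a 0-to-1 factor is a linear falloff that reaches zero at the edge of the field of view. The text does not specify the exact mapping, so the linear shape, the 60-degree default FOV, and the `angle_factor` name below are assumptions made for this sketch.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def angle_factor(cam_pos: Vec3, cam_forward: Vec3, subject_pos: Vec3,
                 fov_degrees: float = 60.0) -> float:
    """Return 1.0 when the subject is on the principal axis and 0.0 at or
    beyond the edge of the FOV (assumed linear falloff in between)."""
    if fov_degrees <= 0.0:
        raise ValueError("fov_degrees must be positive")
    to_subject = tuple(s - c for s, c in zip(subject_pos, cam_pos))
    forward_len = math.sqrt(sum(f * f for f in cam_forward))
    subject_len = math.sqrt(sum(t * t for t in to_subject))
    if forward_len == 0.0 or subject_len == 0.0:
        raise ValueError("camera forward and camera-to-subject vectors must be non-zero")
    cos_offset = sum(f * t for f, t in zip(cam_forward, to_subject)) / (forward_len * subject_len)
    # Guard against rounding pushing the cosine slightly outside [-1, 1].
    cos_offset = max(-1.0, min(1.0, cos_offset))
    offset_degrees = math.degrees(math.acos(cos_offset))
    half_fov = fov_degrees / 2.0
    return max(0.0, 1.0 - offset_degrees / half_fov)
```

With a 60-degree FOV, a subject 15 degrees off-axis scores 0.5 under this mapping, and anything 30 degrees or more off-axis scores 0.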

DistanceFactor: staying within the golden range

Distance affects the level of detail available in an image. To prevent low-resolution captures that hamper VLMs and pose estimators, StaticCameraManager prioritizes nodes that keep the subject in a “golden range” of proximity. The implementation uses a linear decay so that quality declines smoothly with increasing distance; for instance, the system may normalize against a maximum useful distance (for example, 10 meters) so that shorter distances map to higher scores.

In practice, designers often define an ideal working band (2 to 5 meters in many indoor scenarios) for preserving pixel density while retaining scene context. Nodes closer to the subject within this band are preferred; as distance grows toward the maximum threshold, the DistanceFactor shrinks and the final score drops with it. For fine-grained tasks like typing detection or small-object manipulation, designers can shrink the golden band and increase the distance weight to bias selection toward closer vantage points.
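A linear decay of this kind might look like the sketch below. The 10-meter maximum and the 2-to-5-meter band are the example values from the text; the function names and the choice to clamp at zero beyond the maximum are assumptions.

```python
def distance_factor(distance_m: float, max_distance_m: float = 10.0) -> float:
    """Linear decay: 1.0 at zero distance, 0.0 at or beyond max_distance_m."""
    if max_distance_m <= 0.0:
        raise ValueError("max_distance_m must be positive")
    if distance_m < 0.0:
        raise ValueError("distance_m must be non-negative")
    return max(0.0, 1.0 - distance_m / max_distance_m)


def in_golden_band(distance_m: float, near_m: float = 2.0, far_m: float = 5.0) -> bool:
    """True when the subject sits inside the assumed ideal working band."""
    return near_m <= distance_m <= far_m
```

A pure linear decay always prefers the closest node, so a band check like `in_golden_band` is one way to reject vantage points that are too close to retain scene context.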

Case study — choosing the best view for a drinking gesture

Consider two visible nodes capturing a user who is taking a sip from a bottle. Candidate A is a side-back view: line-of-sight is clear, but the hand-to-mouth motion is partially obscured by the shoulder. Candidate B is a side-front view that shows the bottle and the hand trajectory cleanly. Even if both nodes have similar distances, Candidate B will produce a higher AngleFactor, and because visibility is equal, its higher composite score will make it the chosen node. This preserves semantic clarity for models that must detect and timestamp the drinking action.

Case study — capturing keyboard interactions at a desk

At a desk, the difference between a successful capture and a noisy one often comes down to distance and angle. A node that frames the subject at a medium distance with a high-angle perspective often reveals both the hands and keyboard layout. A node that is too far away may keep the same angle but loses pixel detail on finger positions, which its lower DistanceFactor reflects. The heuristic quickly favors the node that balances proximity and framing to maximize the final score, ensuring keyboard interactions are captured with sufficient semantic detail for typing inference or productivity analytics.
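To make the desk scenario concrete, here is a worked example with invented numbers: two visible nodes share an AngleFactor of 0.8, one sits 3 meters from the subject and the other 8 meters, and the DistanceFactor is assumed to decay linearly to zero at 10 meters. None of these values come from a real scene.

```python
def final_score(visibility: float, angle_factor: float, distance_factor: float) -> float:
    """Weighted sum using the 0.5 / 0.3 / 0.2 weights from the text."""
    return 0.5 * visibility + 0.3 * angle_factor + 0.2 * distance_factor


MAX_DISTANCE_M = 10.0  # assumed maximum useful distance

# Both nodes are visible and share the same angle; only distance differs.
near = final_score(1.0, 0.8, 1.0 - 3.0 / MAX_DISTANCE_M)
far = final_score(1.0, 0.8, 1.0 - 8.0 / MAX_DISTANCE_M)

print(f"near node: {near:.2f}")  # near node: 0.88
print(f"far node:  {far:.2f}")   # far node:  0.78
```

The 0.10 gap comes entirely from the distance term (0.2 × 0.7 versus 0.2 × 0.2), which is enough to select the closer node.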

Debugging and visualization with gizmos

To make tuning intuitive for engineers, StaticCameraManager includes a visualization layer that renders node status in the editor. Nodes are color-coded: green indicates a high composite score (ready for capture), while red or grey flags nodes that are out of FOV, occluded, or below a usability threshold. This immediate visual feedback enables iterative refinement of thresholds, FOV parameters, and the golden range without the need to run full data collection cycles.

Rendering these cues also surfaces edge cases: nodes that are marginally visible but consistently low-scoring, or nodes that are within distance bounds but continuously outside the effective FOV. Combining gizmo output with live scoring logs helps teams decide whether to move nodes, adjust weightings in the heuristic, or change the subject’s aim point used for angle calculations.
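The status-to-color mapping can be sketched as a small pure function that a gizmo-drawing routine would call per node. The text does not say which failure maps to red and which to grey, so the split below (grey for nodes that cannot be scored, red for scored nodes under the threshold) and the 0.6 threshold are assumptions.

```python
from enum import Enum


class NodeColor(Enum):
    GREEN = "green"  # usable: visible, in FOV, and above the threshold
    RED = "red"      # assumed meaning: scored, but below the usability threshold
    GREY = "grey"    # assumed meaning: occluded or outside the FOV


def node_color(visible: bool, in_fov: bool, score: float,
               usability_threshold: float = 0.6) -> NodeColor:
    """Map a node's status to the color its editor gizmo would use."""
    if not visible or not in_fov:
        return NodeColor.GREY
    if score < usability_threshold:
        return NodeColor.RED
    return NodeColor.GREEN
```

Keeping the mapping free of engine calls makes it easy to log the same status alongside live scores.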

Implementation choices and trade-offs

Designing a practical scoring engine involves several trade-offs:

- Binary vs. graded visibility: A binary pass/fail is simple and robust but discards nodes that might be usable with partial visibility. Sampling across the subject’s bounding volume produces a graded visibility score that can sometimes rescue borderline nodes but adds computational cost.

- Static weights vs. adaptive weighting: The 0.5/0.3/0.2 weighting prioritizes visibility predictably, but adaptive schemes can re-weight factors depending on the detection task (e.g., increase distance weight for small-object tracking). Adaptive weighting requires more meta-management but can yield better task-specific performance.

- Rate of switching and temporal stability: Teleporting between nodes or switching capture viewpoints too frequently can confuse temporal models and downstream analytics. Adding hysteresis (requiring sustained superiority in score before switching) or smoothing scores over a short time window prevents jitter.

- Performance considerations: Linecasts and multiple angle/distance computations per frame can be expensive in large scenes. Optimizations include culling distant nodes, running the full scoring pass at lower frequency, or performing expensive checks only when triggered by significant subject movement.
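The hysteresis idea from the list above can be sketched as a small stateful selector. The class name, the 0.05 score margin, and the three-update hold period are hypothetical values chosen for illustration; a real deployment would tune them against its own switching logs.

```python
from typing import Dict, Optional


class HysteresisSelector:
    """Switch nodes only after a challenger leads by `margin` for
    `hold_frames` consecutive updates."""

    def __init__(self, margin: float = 0.05, hold_frames: int = 3) -> None:
        self.margin = margin
        self.hold_frames = hold_frames
        self.current: Optional[str] = None
        self._challenger: Optional[str] = None
        self._streak = 0

    def _reset(self) -> None:
        self._challenger = None
        self._streak = 0

    def update(self, scores: Dict[str, float]) -> Optional[str]:
        if not scores:
            return self.current
        best = max(scores, key=scores.get)
        # No usable current node: adopt the best one immediately.
        if self.current is None or self.current not in scores:
            self.current = best
            self._reset()
            return self.current
        leads = best != self.current and scores[best] - scores[self.current] > self.margin
        if not leads:
            self._reset()
            return self.current
        if best == self._challenger:
            self._streak += 1
        else:
            self._challenger = best
            self._streak = 1
        if self._streak >= self.hold_frames:
            self.current = best
            self._reset()
        return self.current
```

A single frame in which the challenger loses its lead resets the streak, so brief score spikes never trigger a switch.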

Integration with AI pipelines and developer tooling

Once the best viewpoint is selected, the capture system feeds frames into an AI backend—often a visual-language model, pose estimator, or activity recognizer. The StaticCameraManager’s deterministic selection reduces variance in training data by biasing capture toward frames that are rich in semantic detail. This has downstream benefits for labeled-data efficiency, model convergence, and inference accuracy.

On the tooling side, the manager sits naturally alongside developer platforms and automation stacks. It can be part of a Unity-based simulation harness feeding synthetic datasets into a model training pipeline, or it can operate on-edge in a robotics stack alongside ROS, sending selected frames to a cloud API for inference.

Business use cases and operational considerations

Practical deployments range from research simulations that generate labeled action datasets to production systems for smart buildings and retail analytics. For example:

- Robotics: A mobile robot using a set of fixed virtual camera nodes to decide where to pause and capture before sending images to a perception stack.

- Smart spaces: Environmental monitoring systems that select the most informative surveillance camera feed for event detection, reducing unnecessary bandwidth usage.

- UX analytics: Capturing user interactions at kiosks or ATMs where the system must show hands and facial orientation to infer intent without recording redundant or low-value footage.

Operationally, teams must also weigh privacy and compliance concerns. Deterministic selection reduces redundant capture, which can help keep storage and processing costs down, but it does not remove the need for consent mechanisms, anonymization, and secure transmission—especially when frames leave local infrastructure and flow to cloud-based AI services.

Tuning thresholds and testing strategies

Effective tuning requires a mix of automated metrics and human-in-the-loop evaluation. Recommended steps:

- Start with the base weights (0.5 visibility / 0.3 angle / 0.2 distance) and collect representative scenes.

- Evaluate downstream model performance (precision/recall for actions) against a labeled validation set.

- Adjust weights for task-specific sensitivity—raise AngleFactor for gesture-heavy tasks, increase DistanceFactor for small-object manipulation.

- Use simulation-based A/B testing to measure how changes affect capture frequency, bandwidth, and model quality.

- Monitor switching behavior to ensure temporal consistency; apply smoothing or minimum dwell times to avoid oscillation.
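When adjusting weights per task, it helps to keep them summing to 1 so scores stay comparable across experiments. The sketch below scales the base weights by per-factor boosts and renormalizes; the `reweight` helper and its boost parameters are assumptions, not part of StaticCameraManager.

```python
from typing import Tuple

Weights = Tuple[float, float, float]  # (visibility, angle, distance)

BASE_WEIGHTS: Weights = (0.5, 0.3, 0.2)


def reweight(base: Weights = BASE_WEIGHTS, angle_boost: float = 1.0,
             distance_boost: float = 1.0) -> Weights:
    """Scale the angle and distance weights, then renormalize to sum to 1."""
    if angle_boost < 0.0 or distance_boost < 0.0:
        raise ValueError("boosts must be non-negative")
    visibility, angle, distance = base
    scaled = (visibility, angle * angle_boost, distance * distance_boost)
    total = sum(scaled)
    if total <= 0.0:
        raise ValueError("weights must sum to a positive value")
    return (scaled[0] / total, scaled[1] / total, scaled[2] / total)


# Example: bias toward closer vantage points for small-object manipulation.
small_object_weights = reweight(distance_boost=2.0)
```

One caveat: any boost pushes the visibility weight below 0.5 after renormalization, so an occluded node could outscore a poorly framed visible one unless visibility is also enforced as a hard gate.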

Extending the model: occlusion modeling, multi-node fusion, and prediction

Several natural extensions can make viewpoint selection even more powerful:

- Occlusion modeling: Rather than a single linecast, use multiple rays or volumetric occlusion checks to compute a graded visibility that accounts for partial obstruction.

- Multi-node fusion: Instead of picking one node, capture from the top two nodes and fuse frames or features. This is useful when a single perspective cannot capture complementary details.

- Predictive selection: Use lightweight motion models to anticipate where a subject will move next and pre-position the virtual camera node to reduce latency and missed actions.

These enhancements increase complexity but open doors to richer datasets and more resilient capture in dynamic scenes.
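Two of the extensions above, multi-node fusion and predictive selection, can be sketched in a few lines. The example picks the top two nodes by score and extrapolates the subject position with a constant-velocity model; both helper names and the constant-velocity assumption are illustrative choices, and a real system might use a richer motion model.

```python
import heapq
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def top_nodes(scores: Dict[str, float], k: int = 2) -> List[str]:
    """Return the k highest-scoring node names, best first."""
    return heapq.nlargest(k, scores, key=scores.get)


def predict_position(position: Vec3, velocity: Vec3, lookahead_s: float) -> Vec3:
    """Constant-velocity extrapolation of the subject's aim point."""
    return (
        position[0] + velocity[0] * lookahead_s,
        position[1] + velocity[1] * lookahead_s,
        position[2] + velocity[2] * lookahead_s,
    )
```

Scoring the nodes against the predicted position rather than the current one is one way to pre-select a viewpoint before the subject arrives.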

Security, privacy, and deployment constraints

Any system that selects and transmits imagery must incorporate security controls. Encrypting frames in transit, authenticating API endpoints, and minimizing retention are essential for compliance. For edge deployments, bandwidth constraints may limit the number and resolution of captured frames; StaticCameraManager’s focus on selecting higher-quality frames can reduce data movement by favoring fewer but more informative captures.

From a developer perspective, integrating the manager into existing CI pipelines means providing robust unit tests for scoring logic, deterministic behavior under mocked physics, and profiling hooks to detect performance regressions.
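A unit test for the scoring logic under mocked physics might look like the sketch below. The occlusion check is injected as a callable so tests can replace the real linecast with a stub; `score_node` and its signature are hypothetical and only mirror the 0.5 / 0.3 / 0.2 formula from the text.

```python
import unittest
from typing import Callable


def score_node(node: str, subject: str, is_blocked: Callable[[str, str], bool],
               angle_factor: float, distance_factor: float) -> float:
    """Score one node; `is_blocked` stands in for the physics linecast."""
    visibility = 0.0 if is_blocked(node, subject) else 1.0
    return 0.5 * visibility + 0.3 * angle_factor + 0.2 * distance_factor


class ScoreNodeTests(unittest.TestCase):
    def test_occluded_node_never_beats_visible_node(self) -> None:
        # Best-case occluded node versus worst-case visible node.
        occluded = score_node("n1", "s", lambda n, s: True, 1.0, 1.0)
        visible = score_node("n2", "s", lambda n, s: False, 0.0, 0.0)
        self.assertLessEqual(occluded, visible)

    def test_scoring_is_deterministic(self) -> None:
        def never_blocked(node: str, subject: str) -> bool:
            return False

        first = score_node("n1", "s", never_blocked, 0.8, 0.7)
        second = score_node("n1", "s", never_blocked, 0.8, 0.7)
        self.assertEqual(first, second)
        self.assertAlmostEqual(first, 0.88)
```

Saved as a test module, this runs with `python -m unittest`; injecting the occlusion check keeps the tests independent of any physics engine.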

How StaticCameraManager complements existing ecosystems

StaticCameraManager is not a replacement for camera calibration, dataset pipelines, or VLM training tools—it complements them. It sits upstream of visual models and can be incorporated into synthetic data generators, annotation tools, and model evaluation suites. The scoring engine produces higher-signal data for systems like pose estimators, scene graph generators, and activity recognition models, while being agnostic to the specific AI stacks (cloud inference endpoints, on-device neural nets, or hybrid systems).

Teams working with marketing analytics platforms or CRM-integrated systems that incorporate behavioral signals can benefit from better-quality capture without increasing capture volume. Likewise, developer tools that focus on scene composition and camera rigs can expose StaticCameraManager tuning knobs—weight sliders, golden-range parameters, occlusion sampling density—to non-expert users.

Where this leads

As capture systems increasingly feed into multimodal AI—where visual inputs combine with audio, sensor telemetry, and language models—the importance of deterministic, quality-focused viewpoint selection will grow. StaticCameraManager’s scoring approach can evolve into adaptive, task-aware policies that learn which viewpoints historically produce the most predictive features for specific models, enabling more efficient on-device processing, more privacy-preserving data collection, and lower operational costs. Continued work on graded occlusion, multi-view fusion, and predictive pre-positioning will make these systems more robust in unconstrained environments, helping bridge simulation and real-world deployment for both research and commercial applications.