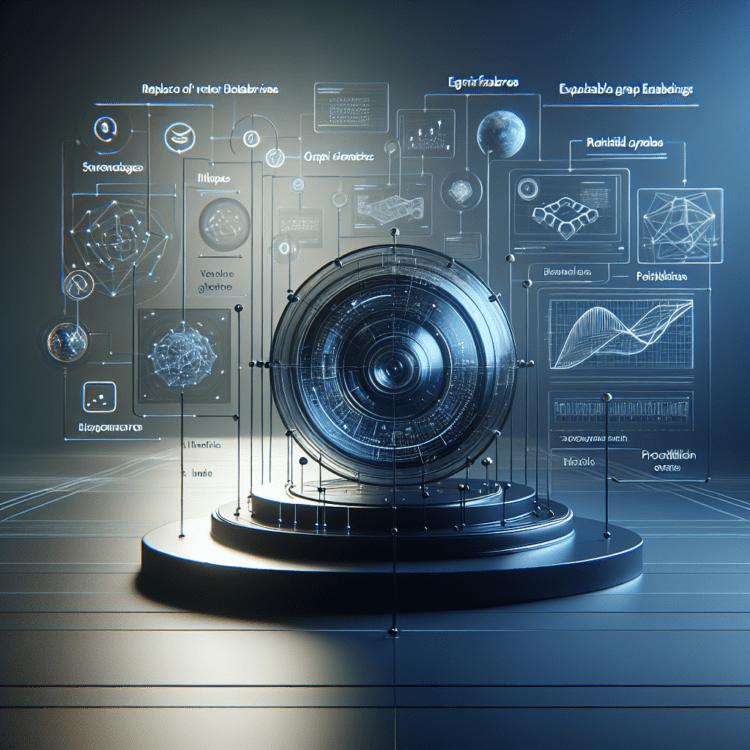

Terraphim’s knowledge‑graph approach delivers sub‑millisecond, explainable graph embeddings for on‑device AI

Terraphim combines a knowledge graph and an Aho‑Corasick automaton to deliver deterministic, explainable, sub‑millisecond graph embeddings for on‑device AI.

A different take on graph embeddings and context for AI agents

Terraphim replaces the common vector‑database pattern with a compact knowledge‑graph representation and a single automaton for matching. Rather than computing dense-vector distances on every query, it represents concepts as nodes connected by labeled edges and resolves synonyms through a prebuilt Aho‑Corasick automaton. The result is a deterministic matching pipeline that emphasizes traceability and low latency — properties the project argues are crucial when you must explain why a result was returned or meet tight on‑device latency budgets.
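To make the shape of that representation concrete, here is a minimal sketch in Python. It is not Terraphim's data model: the `Concept` class, the toy concepts, the `has_instance` edge label, and the `resolve` and `neighbours` helpers are all invented for illustration, and a plain dictionary stands in for the automaton.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Concept:
    """A knowledge-graph node: one canonical concept plus its outgoing labeled edges."""
    id: int
    name: str
    # edge label -> ids of the concepts that edge points to
    edges: dict[str, list[int]] = field(default_factory=dict)


# Hypothetical toy graph; names and edge labels are illustrative only.
concepts = {
    1: Concept(1, "package manager", {"has_instance": [2, 3]}),
    2: Concept(2, "npm"),
    3: Concept(3, "bun"),
}

# Synonym table: every declared surface form resolves to exactly one concept id.
synonyms = {
    "package manager": 1,
    "dependency manager": 1,
    "npm": 2,
    "node package manager": 2,
    "bun": 3,
}


def resolve(term: str) -> Concept | None:
    """Deterministic lookup: the same term always yields the same node, or nothing."""
    concept_id = synonyms.get(term.strip().lower())
    return concepts[concept_id] if concept_id is not None else None


def neighbours(concept: Concept, label: str) -> list[str]:
    """Follow one labeled edge and return the names of the target concepts."""
    return [concepts[target].name for target in concept.edges.get(label, [])]
```

Calling `resolve("Dependency Manager")` returns the "package manager" node, and `resolve("yarn")` returns `None` because that term was never declared. No distance computation is involved at any point.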

How Terraphim performs at small scale

The project publishes three reproducible performance figures that frame its engineering tradeoffs and target use cases. First, with a working set that includes multiple role graphs (for example operator, engineer, and analyst vocabularies), the matcher handles 1.4 million patterns in under one millisecond while using less than 4 GB of RAM. Second, once the automaton is constructed, each knowledge‑graph inference step runs in the nanosecond range (reported as 5–10 ns per step), because traversal becomes a compact loop over bytes and edge lists. Third, rebuilding a role’s embeddings from source after adding, renaming, or removing synonyms completes in around 20 milliseconds, which allows edits to take effect almost immediately during interactive workflows. For context, the project cites a typical vector nearest‑neighbour lookup at 5–50 ms, on top of the cost of obtaining the embedding in the first place (an API call often measured at 50–500 ms, including network round trips); Terraphim positions itself in a different latency regime.

Why deterministic matching changes guarantees you can make

One of the central claims for Terraphim is explainability. Because each match corresponds to a concrete synonym and a specific edge in the knowledge graph, every returned result can be traced back to the exact graph element that produced it. That traceability removes the “black‑box” answer of “the model said so” and provides a human‑auditable path from input to match — a capability the project highlights as essential in regulated domains such as healthcare, legal, finance, and government. In these environments the ability to enumerate which term matched, which role supplied the synonym, and how nodes linked to the result can be a regulatory requirement rather than a debugging convenience.
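The audit trail described here can be pictured as a small record attached to every match. The sketch below is an assumption-laden illustration, not Terraphim's API: the `MatchTrace` fields, the `ROLE_SYNONYMS` tables, and the `trace_matches` function are hypothetical, and a naive substring scan stands in for the automaton.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatchTrace:
    matched_text: str  # exact substring found in the input
    start: int         # character offset of the match in the input
    synonym: str       # declared synonym that fired
    concept: str       # concept the synonym belongs to
    role: str          # role graph that supplied the synonym


# Hypothetical per-role synonym tables (synonym -> concept).
ROLE_SYNONYMS = {
    "engineer": {"rca": "root cause analysis"},
    "analyst": {"kpi": "key performance indicator"},
}


def trace_matches(text: str) -> list:
    """Return one MatchTrace per occurrence of a declared synonym (ASCII input assumed)."""
    lowered = text.lower()
    traces = []
    for role, table in ROLE_SYNONYMS.items():
        for synonym, concept in table.items():
            start = lowered.find(synonym)
            while start != -1:
                matched = text[start:start + len(synonym)]
                traces.append(MatchTrace(matched, start, synonym, concept, role))
                start = lowered.find(synonym, start + 1)
    return sorted(traces, key=lambda t: t.start)
```

For the input `"The RCA missed a KPI."` this yields two records, one attributing "RCA" to the engineer role and one attributing "KPI" to the analyst role, each with the offset and the concept it resolved to. A reviewer can read those records directly instead of interpreting a similarity score.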

A workflow without retraining

Terraphim’s design eliminates the need for training, retraining, or fine‑tuning to incorporate new vocabulary. Adding or updating a concept is a text edit: you add a synonym, point the system at the file, and the role graph is rebuilt in milliseconds. There is no GPU training run, no scheduling of retraining jobs, and no embedding API costs tied to schema changes. For teams onboarding projects or iterating on domain‑specific terminology, this collapses a maintenance loop from days or weeks down to seconds and allows agent vocabularies to evolve interactively.
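The edit-and-rebuild loop can be sketched as a pure function from synonym text to a lookup table. The one-concept-per-line `concept: synonym, synonym` format below is an assumption made for this example and is not Terraphim's file format; `build_role_table` is likewise a hypothetical name, and the sketch makes no claim about timings.

```python
def build_role_table(source: str) -> dict:
    """Parse 'concept: synonym, synonym' lines into a synonym -> concept table."""
    table = {}
    for line in source.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        concept, _, raw_synonyms = line.partition(":")
        concept = concept.strip().lower()
        table[concept] = concept  # a concept always matches its own name
        for synonym in raw_synonyms.split(","):
            synonym = synonym.strip().lower()
            if synonym:
                table[synonym] = concept
    return table


# Adding vocabulary is a text edit followed by a rebuild of the table.
before = build_role_table("bun: npm, yarn\n")
after = build_role_table("bun: npm, yarn, pnpm\n")
```

After the edit, `after["pnpm"]` resolves to `"bun"` while `before` has no entry for it. Nothing was trained in between; the table was simply rebuilt from the edited text.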

Language‑agnostic matching by design

Matching in Terraphim operates over normalized labels that you supply. The same graph node can carry labels in multiple languages — English, French, Russian, Mandarin — with no separate indices or per‑language models. Because the system only matches what is present in the graph, language detection and stop‑word lists are unnecessary: if a token has not been declared as a synonym, it simply does not match. That explicit approach gives project maintainers full control over what the matcher recognizes and reduces the complexity of maintaining multilingual indices.
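As an illustration of one node carrying labels in several languages, the sketch below normalizes labels with Unicode NFKC plus case folding and then looks for declared labels in the input. The normalization choice, the `LABELS` table, and the function names are assumptions for this example; the source does not say how Terraphim normalizes labels, and a substring scan again stands in for the automaton.

```python
import unicodedata


def normalize(label: str) -> str:
    """Assumed normalization: Unicode NFKC followed by case folding."""
    return unicodedata.normalize("NFKC", label).casefold()


# One concept, four declared labels. No per-language index or model is involved.
LABELS = {
    "knowledge graph": [
        "knowledge graph",          # English
        "graphe de connaissances",  # French
        "граф знаний",              # Russian
        "知识图谱",                  # Mandarin
    ],
}

INDEX = {
    normalize(label): concept
    for concept, labels in LABELS.items()
    for label in labels
}


def match_concepts(text: str) -> list:
    """Return the concepts whose declared labels occur in the normalized text."""
    normalized = normalize(text)
    return sorted({concept for label, concept in INDEX.items() if label in normalized})
```

Russian and Mandarin inputs containing the declared labels resolve to the same concept as the English one, while a German sentence about a "Wissensgraph" matches nothing because no German label was declared.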

Practical capabilities enabled by graph‑based context

Switching the matching primitive unlocks practical behaviors that are difficult to achieve with black‑box vector retrieval:

- Deterministic command rewriting: agent suggestions can be intercepted and rewritten according to graph matches. For example, an agent’s suggestion to run npm install can be rewritten to bun install when the role graph maps those commands as synonyms, enabling safe, reproducible transformations of generated text.

- Persistent corrections: when an agent is corrected, the correction can be saved as a synonym so future sessions avoid repeating the same mistake. That allows a deployed agent to learn from edits without retraining.

- Small‑footprint, offline operation: the entire system can run inside a single 4 GB process on a laptop with no external network calls, making it feasible to serve context locally and meet strict privacy or latency constraints.

These capabilities are presented as concrete demonstrations of how the architecture changes what developers can build around AI agents and context management.
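The command-rewriting behavior in the first bullet can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than Terraphim's hook mechanism: the `REWRITES` table and `rewrite` function are hypothetical, the `npx` entry is an extra example not taken from the source, and a compiled regular-expression alternation stands in for the automaton.

```python
import re

# Declared rewrites (matched command -> preferred command).
# "npm install" -> "bun install" comes from the example above; "npx" is an added illustration.
REWRITES = {
    "npm install": "bun install",
    "npx": "bunx",
}

# Longest patterns first so the longer command wins when patterns share a prefix.
_PATTERN = re.compile(
    "|".join(re.escape(key) for key in sorted(REWRITES, key=len, reverse=True))
)


def rewrite(text: str):
    """Return the rewritten text plus a log of (offset, original, replacement)."""
    applied = []

    def swap(match):
        replacement = REWRITES[match.group(0)]
        applied.append((match.start(), match.group(0), replacement))
        return replacement

    return _PATTERN.sub(swap, text), applied
```

Given `"Run npm install, then npx vitest."` the function returns `"Run bun install, then bunx vitest."` together with a log of both substitutions. Saving a correction amounts to adding one more entry to the table, which is the persistent-corrections idea from the second bullet.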

What the matching pipeline looks like in practice

At runtime, Terraphim uses a single automaton constructed from the supplied synonym set. Queries are processed in time proportional to the input length plus the number of matches. This is the query‑time share of the O(n + m + z) bound that characterizes the Aho‑Corasick algorithm, where n is the input length, m is the total length of all patterns (paid once, when the automaton is built), and z is the number of matches reported. Matches are then routed into the graph for edge‑based ranking. Because the automaton is built once and reused across queries, per‑query cost is dominated by a tight byte‑slice walk and graph edge traversals, which is why per‑step costs shrink to the nanosecond scale on modern CPUs. The documentation and reference material describe the automaton and the ranking formula, and include an ASCII walk‑through of the traversal that explains the data structures and implementation choices in detail.
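For readers who have not seen the algorithm, the following is a compact, unoptimized sketch of the textbook Aho‑Corasick construction in Python. It is not Terraphim's implementation: the `AhoCorasick` class and its `find_all` method are names chosen for this example, it walks Python characters rather than bytes, and it stops before any graph ranking.

```python
from collections import deque


class AhoCorasick:
    """Trie plus failure links; built once, then reused for every scan."""

    def __init__(self, patterns):
        self.goto = [{}]   # goto[state][char] -> next state
        self.fail = [0]    # fail[state] -> longest proper suffix that is also a trie prefix
        self.out = [[]]    # out[state] -> patterns that end at this state
        for pattern in patterns:
            self._insert(pattern)
        self._build_failure_links()

    def _insert(self, pattern):
        state = 0
        for ch in pattern:
            nxt = self.goto[state].get(ch)
            if nxt is None:
                nxt = len(self.goto)
                self.goto.append({})
                self.fail.append(0)
                self.out.append([])
                self.goto[state][ch] = nxt
            state = nxt
        self.out[state].append(pattern)

    def _build_failure_links(self):
        # Breadth-first: depth-1 states keep fail = 0 (the root).
        queue = deque(self.goto[0].values())
        while queue:
            state = queue.popleft()
            for ch, nxt in self.goto[state].items():
                queue.append(nxt)
                f = self.fail[state]
                while f and ch not in self.goto[f]:
                    f = self.fail[f]
                self.fail[nxt] = self.goto[f].get(ch, 0)
                # Inherit matches that end at the suffix state; copying the list
                # keeps the scan loop simple at some build-time cost.
                self.out[nxt] = self.out[nxt] + self.out[self.fail[nxt]]

    def find_all(self, text):
        """Single left-to-right pass; returns (start_offset, pattern) pairs."""
        state = 0
        hits = []
        for i, ch in enumerate(text):
            while state and ch not in self.goto[state]:
                state = self.fail[state]
            state = self.goto[state].get(ch, 0)
            for pattern in self.out[state]:
                hits.append((i - len(pattern) + 1, pattern))
        return hits
```

With the classic pattern set `["he", "she", "his", "hers"]`, scanning `"ushers"` reports "she" at offset 1 and both "he" and "hers" at offset 2, all in one pass over the text. The automaton is constructed in `__init__` and never modified by `find_all`, which is what allows the build cost to be amortized across queries.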

Who should consider this approach and why

Terraphim’s model targets scenarios where explainability, determinism, low latency, or offline operation is a primary requirement. Examples include:

- On‑device AI assistants and coding agents that must run without network access.

- Regulated applications that need auditable decisions for compliance.

- Teams wanting rapid iteration on domain vocabulary without retraining cycles.

- Multilingual projects that prefer explicit synonym control over language detection pipelines.

By contrast, the maintainers acknowledge that vector databases and embedding APIs remain widely used and appropriate for many semantic search problems; Terraphim is positioned as an engineered alternative for the subset of problems where its tradeoffs are decisive.

Integration patterns and developer workflow

The project provides practical how‑tos for integrating the matcher into application pipelines. A command‑rewriting how‑to walks through where to place synonyms, how role graphs are assembled, and how hooks call the matcher to intercept and transform agent outputs. The reference documentation details the automaton construction, ranking mechanisms, and example data structures so engineers can reproduce the matching behavior in their systems. Because role graphs can be rebuilt in roughly 20 milliseconds, the recommended development flow emphasizes live edits to synonym files and immediate validation in the target application.

Evidence and reproducible measurements

The performance claims in the source are presented as reproducible on a laptop: matching 1.4 million patterns in under one millisecond using less than 4 GB of RAM; 5–10 nanoseconds per graph inference step after automaton construction; and a rebuild time of around 20 ms per role. The authors contrast these figures with a typical vector‑DB nearest‑neighbour latency (reported as 5–50 ms) plus the time and network cost to obtain embeddings (reported as 50–500 ms for an embedding API call and round‑trip). Those comparisons are used to argue that Terraphim occupies a distinct latency and operational envelope.
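The comparison reduces to simple arithmetic on the quoted ranges. The snippet below only combines the numbers reported above and measures nothing; it assumes the embedding call and the vector lookup happen one after the other, and it treats "under one millisecond" as an upper bound of 1 ms.

```python
# Figures as quoted in the text (milliseconds unless noted).
EMBEDDING_CALL_MS = (50, 500)   # embedding API call, including round trip
VECTOR_LOOKUP_MS = (5, 50)      # nearest-neighbour lookup
AUTOMATON_MATCH_MS = 1.0        # "under one millisecond", taken as an upper bound
STEP_NS = (5, 10)               # per graph inference step

# Assumed serial path: obtain the embedding, then run the lookup.
vector_path_ms = (
    EMBEDDING_CALL_MS[0] + VECTOR_LOOKUP_MS[0],
    EMBEDDING_CALL_MS[1] + VECTOR_LOOKUP_MS[1],
)

# Because the automaton figure is an upper bound, these ratios are lower bounds.
min_ratio = vector_path_ms[0] / AUTOMATON_MATCH_MS
max_ratio = vector_path_ms[1] / AUTOMATON_MATCH_MS

# How many inference steps fit into one millisecond at the quoted per-step cost.
steps_per_ms = (1_000_000 // STEP_NS[1], 1_000_000 // STEP_NS[0])
```

On these numbers the vector path totals 55 to 550 ms, at least 55 times the quoted match time, and a one-millisecond budget leaves room for 100,000 to 200,000 inference steps.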

Broader implications for tools, platforms, and businesses

If adopted more widely, this pattern — explicit knowledge graphs plus fast automata matching — would shift some design decisions that are currently default in the AI tooling ecosystem. For platforms and developer tools, it suggests the possibility of shipping contextual systems that are auditable by design and that scale horizontally across use cases without heavy model retraining. For businesses, the approach could reduce dependency on embedding API costs and GPU infrastructure when the core requirement is traceable matching rather than semantic generalization. For security and compliance teams, the deterministic provenance of matches simplifies post‑hoc analysis compared with vector‑based retrieval where similarity scores lack a direct mapping to specific input tokens or declared synonyms.

At the same time, the team frames this as a complementary answer rather than a universal replacement: vector search remains valuable where approximate semantic similarity and model‑derived generalization are primary; graph‑based matching is presented as the engineered choice for explainability‑first, low‑latency, and on‑device scenarios.

Practical limitations and considerations to watch for

The published material emphasizes properties and measurements that support specific design goals; it does not claim universality. The graph approach relies on curated synonym sets and explicitly supplied labels, which gives operators precise control over matching but also places the onus on teams to maintain those synonym files and role graphs. Because the system only matches what has been declared, its recall is bounded by the coverage of those resources; teams must therefore adopt workflows that keep synonyms and edges up to date if they want comprehensive matches across evolving domains.
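One way a team might keep an eye on that recall bound is a coverage check that compares the declared synonyms against terms actually seen in use. This is a hypothetical workflow aid, not something the source describes; the `coverage` function and the sample terms are invented for the example.

```python
def coverage(declared, observed_terms):
    """Return (fraction of observed terms that are declared, sorted missing terms)."""
    normalized = [term.strip().lower() for term in observed_terms]
    if not normalized:
        return 1.0, []
    covered = sum(1 for term in normalized if term in declared)
    missing = sorted({term for term in normalized if term not in declared})
    return covered / len(normalized), missing


# Hypothetical data: two of the four observed terms have been declared.
ratio, missing = coverage({"npm", "bun"}, ["npm", "Bun", "pnpm", "yarn"])
```

Here half of the observed terms are covered and `missing` lists "pnpm" and "yarn", which are the candidates for the next synonym edit.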

Resources, next steps, and ecosystem touchpoints

For teams that want to trial the approach, the project’s how‑to on command rewriting is intended as a practical integration guide, and the Graph Embeddings reference documents the automaton, ranking formula, and data structures. The maintainers have also signaled a promotional series that includes a detailed sub‑millisecond implementation article covering the finite‑state transducer and Aho‑Corasick work, and a book titled Context Engineering with Knowledge Graphs slated to launch in May. The community is encouraged to share experiments and integrations on the project’s Discourse forum.

Terraphim’s design demonstrates a deliberate engineering trade: favor explicit, auditable matching and rapid edit cycles over probabilistic, model‑inferred similarity. For teams that need deterministic behavior, strict latency budgets, or local execution without network dependencies, the knowledge‑graph plus automaton pattern offers a distinct, reproducible toolkit that changes how context for AI agents is built and governed.

Looking ahead, this work points toward a hybrid future in which explicit, graph‑based context layers and learned embedding models coexist and are selected based on application requirements. As projects adopt finer‑grained explainability and stronger on‑device guarantees, expect toolchains, CI workflows, and developer documentation to evolve around fast rebuild loops and curated synonym management rather than retrain cycles alone — a shift that will alter how teams ship, audit, and iterate on contextual behavior for AI systems.